Most extraction pipelines look fine in demos. They break quietly on page 47 of a 200-page invoice, dropping rows and consolidating line items nobody asked them to consolidate. By the time anyone notices, the numbers have already traveled downstream into a payment run, a compliance report, or an audit file.

The core problem is structural: single-pass extraction has no accountability loop. The model extracts once and ships whatever it produced. No verification, no reconciliation against document totals, and no way to flag what got dropped. The output looks complete because nothing in the pipeline knows what "complete" means.

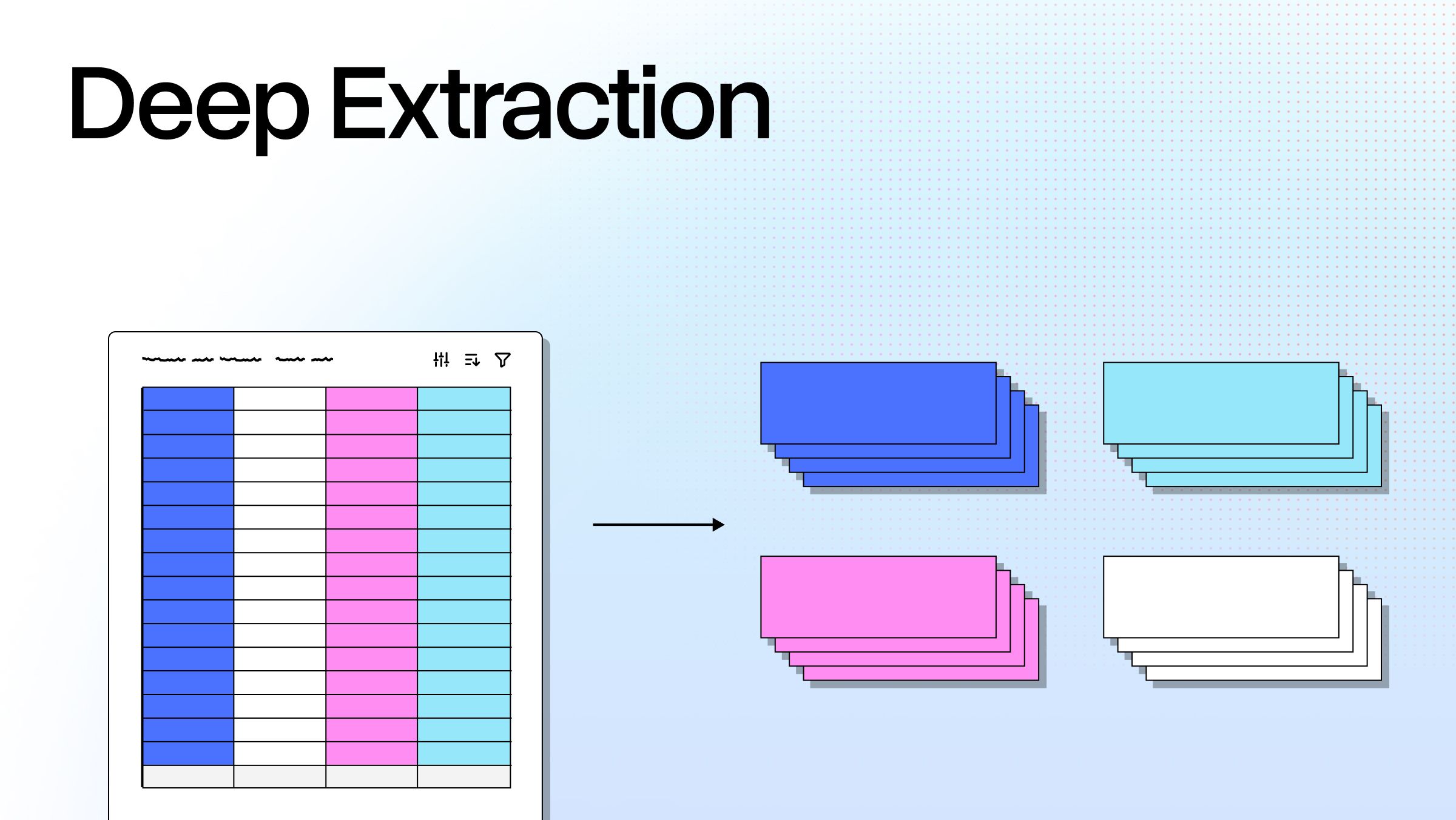

Deep extraction fixes this through an iterative, agent-driven approach that extracts, verifies output against the source document, identifies gaps or inconsistencies, and re-extracts until the output meets a defined quality threshold. The architecture enforces accountability in a way single-pass simply doesn't.

When you're dealing with high-stakes documents, that distinction matters. Going from 10-20% field accuracy with a single-pass frontier model to 99-100% with an agentic verification loop isn't incremental. It's a categorically different result: the difference between a demo that works and a pipeline that holds up in production.

Why Extraction Pipelines Break on Real-World Documents

Single-pass document extraction has one fundamental blind spot: it has no mechanism to catch its own errors. If the model skips a row, nothing flags it. The pipeline moves on.

This failure mode is predictable. Language models take shortcuts on long, repetitive tasks. Given 1,000 line items across a 500-page fund statement, they stop short, consolidate entries, or silently drop records. That is what degraded attention at scale looks like: the model treats the document as something to summarize, not something to audit.

Complex documents make it worse. Multi-column layouts, nested tables, footnotes that modify values on other pages, embedded images containing data: each is a failure surface that single-pass handles inconsistently. The model gets most of it right, most of the time. Production workflows need better odds than that.

OCR accuracy and extraction completeness are also two separate problems. Most pipelines only solve the first. Reading the text correctly is step one. Verifying that extracted values are complete, consistent, and reconcilable against document-level totals is the harder step, and it's the one that determines whether downstream systems can trust the output.

The traditional response was human review. That works at low volume. Financial services teams processing hundreds of brokerage statements per week, or insurance carriers handling thousands of claims monthly, can't have every extraction pass through a human checkpoint. The errors don't go away. They get absorbed by the review queue until the backlog becomes the bottleneck.

That's what makes the pipeline architecture itself the variable worth changing.

What Deep Extraction Actually Is

Deep extraction is an agentic loop that extracts, verifies against the source document, identifies gaps or inconsistencies, and re-extracts until output meets a defined quality threshold.

The architecture is fundamentally different from a single-pass approach. Sub-agents handle separate document components (line items, header fields, totals, embedded tables) rather than a single model ingesting the entire document in one pass. A verification agent then checks the assembled output against the source before anything is returned. If the invoice total doesn't reconcile with the line item sum, the system knows. It re-extracts the affected section instead of moving on.
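The reconciliation check described above can be sketched in a few lines. This is a minimal illustration, not any product's implementation: the function name `reconcile_total`, the `amount` key, and the one-cent tolerance are all assumptions made for the example.

```python
from decimal import Decimal


def reconcile_total(line_items, stated_total, tolerance=Decimal("0.01")):
    """Compare the sum of extracted line items to the total printed on the document.

    Returns (ok, difference). Names and the tolerance are hypothetical.
    """
    computed = sum((Decimal(item["amount"]) for item in line_items), Decimal("0"))
    difference = computed - Decimal(stated_total)
    return abs(difference) <= tolerance, difference


# Two rows were extracted, but the document's stated total implies a third.
line_items = [
    {"description": "Feed", "amount": "1200.00"},
    {"description": "Transport", "amount": "350.50"},
]
ok, difference = reconcile_total(line_items, "1750.50")
if not ok:
    print(f"Line items are off by {difference}; re-extract the line item section")
```

Using `Decimal` rather than floats keeps currency arithmetic exact, so a mismatch reflects a real extraction gap and not rounding noise.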

Vision language models (VLMs) are central to making this work on real documents. They're what distinguishes modern agentic OCR from both traditional OCR and standard LLM extraction. Multi-modal understanding lets the system read tables, charts, and embedded images that text-only extraction misses entirely. A single-pass pipeline might pull clean text from a document and miss the chart on page 12 containing the actual performance figures. A VLM-equipped pipeline sees that chart as data, not decoration.

Deep learning-based models alone aren't enough without an orchestration layer on top. A powerful model that extracts once and returns is still a single-pass system. The verification loop is what converts extraction into a process you can hold accountable.

How you define "correct" is a design decision worth getting right. Verification criteria can be explicit (totals must reconcile, schema must validate, required fields must be present) or inferred from document context by the agent itself. The strictness you apply should match the stakes of the downstream workflow.
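One way to make explicit criteria concrete is to express each one as a named rule and mark which failures should block output. The sketch below assumes a flat dictionary record; the field names, the `Rule` class, and the blocking/warning split are illustrative choices, not part of any specific tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]
    blocking: bool = True  # blocking failures force re-extraction; others only warn


def required_fields_present(record):
    required = ("invoice_number", "invoice_date", "total")  # hypothetical schema
    return all(record.get(field) not in (None, "") for field in required)


def total_is_numeric(record):
    try:
        float(record["total"])
        return True
    except (KeyError, TypeError, ValueError):
        return False


RULES = [
    Rule("required_fields_present", required_fields_present),
    Rule("total_is_numeric", total_is_numeric),
    # A low-stakes rule: missing PO numbers are reported but do not block output.
    Rule("has_po_number", lambda record: bool(record.get("po_number")), blocking=False),
]


def verify(record, rules=RULES):
    failures = [rule for rule in rules if not rule.check(record)]
    blocking = [rule.name for rule in failures if rule.blocking]
    warnings = [rule.name for rule in failures if not rule.blocking]
    return {"passed": not blocking, "blocking": blocking, "warnings": warnings}


result = verify({"invoice_number": "INV-001", "invoice_date": "2024-03-01", "total": "12x.50"})
print(result)
```

Matching strictness to stakes then becomes a matter of which rules are marked `blocking` for a given workflow.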

When You Actually Need Deep Extraction

High-stakes documents make the case: financial statements, legal contracts, insurance claims, regulatory filings. So does any document with more than 50 line items or cross-document reconciliation requirements, and any workflow where a dropped field has downstream consequences: payments that don't process, compliance checks that fail, audits that surface errors months later. Invoice processing automation is one of the most common first deployments, but the pattern holds across any high-stakes document workflow. If manual review is currently your backstop, that's the signal.

When Standard Extraction Is Enough

Short, structured documents with consistent layouts (standard forms, simple invoices) don't need the overhead of an agentic loop. Neither do workflows where ~95% field accuracy is acceptable and human spot-checks cover the rest. The investment in agentic verification is justified by the cost of errors, not the volume of documents.

The Architecture Behind Accurate Agentic Document Processing

Breaking document processing into verifiable units is the key architectural move in agentic document processing. It’s what separates deep extraction from standard single-pass pipelines. Rather than feeding a model the entire document and hoping it produces a complete output, a deep extraction pipeline assigns each component to a sub-agent optimized for that task. Line items, header fields, totals, and embedded tables are extracted separately, then assembled and verified as a whole.

In the verification loop, extracted output is compared against the source document. Discrepancies (a line item count that doesn't match the document's own summary, a field value that doesn't appear in the cited section) trigger targeted re-extraction rather than full reprocessing. The system addresses the specific gap without reprocessing content it already got right.
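The control flow of such a loop can be sketched as follows. Everything here is hypothetical: `extract_section` stands in for whatever sub-agent call a real pipeline would make, the only check shown is a row count against the document's own summary, and `max_rounds` is an arbitrary cap to keep the loop from running forever.

```python
def verify_sections(sections, expected_counts):
    """Return names of sections whose row count disagrees with the document's summary."""
    return [
        name
        for name, rows in sections.items()
        if len(rows) != expected_counts.get(name, len(rows))
    ]


def deep_extract(extract_section, section_names, expected_counts, max_rounds=3):
    """Extract every section once, then re-extract only the sections that fail verification."""
    sections = {name: extract_section(name, attempt=0) for name in section_names}
    for attempt in range(1, max_rounds + 1):
        failed = verify_sections(sections, expected_counts)
        if not failed:
            return sections, []
        for name in failed:  # targeted: sections that passed are left untouched
            sections[name] = extract_section(name, attempt=attempt)
    return sections, verify_sections(sections, expected_counts)


# Stand-in extractor that drops a row on its first pass over the line items.
calls = []


def fake_extract_section(name, attempt):
    calls.append((name, attempt))
    if name == "line_items" and attempt == 0:
        return ["row 1", "row 2"]
    return {"header": ["invoice_number"], "line_items": ["row 1", "row 2", "row 3"]}[name]


sections, unresolved = deep_extract(
    fake_extract_section,
    ["header", "line_items"],
    expected_counts={"header": 1, "line_items": 3},
)
print(calls)
```

The second return value lists sections that still fail after the final round, which gives a pipeline something explicit to escalate instead of shipping silently incomplete output.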

Citations and bounding boxes are the verifiability layer that makes this production-ready. Every extracted field maps to a specific location in the source document. This granular field provenance enables document understanding at the audit level, not just at the output level. An adjuster reviewing a claims extraction can trace any value directly to the source. A compliance team can prove exactly what the extraction system saw and when.
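As a rough picture of what field-level provenance might look like as data, the sketch below attaches a page, a bounding box, and the cited text to each extracted value. The class names, the coordinate layout, and the `is_grounded` check are assumptions for illustration, not a real product schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundingBox:
    page: int
    x0: float
    y0: float
    x1: float
    y1: float


@dataclass(frozen=True)
class ExtractedField:
    name: str
    value: str
    box: BoundingBox
    cited_text: str  # the text read from inside the box on the source page


def is_grounded(field):
    """A field counts as grounded if its value literally appears in the text it cites."""

    def normalize(text):
        return "".join(text.split()).lower()

    return normalize(field.value) in normalize(field.cited_text)


total = ExtractedField(
    name="total",
    value="4,310.25",
    box=BoundingBox(page=3, x0=400.0, y0=700.0, x1=520.0, y1=715.0),
    cited_text="Total due: 4,310.25 USD",
)
# Same citation, but the value no longer matches what is on the page.
drifted = ExtractedField("total", "4,810.25", total.box, total.cited_text)

print(is_grounded(total), is_grounded(drifted))
```

A literal substring check is deliberately strict; a real system would likely need looser matching for reformatted dates or currency symbols.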

Production-ready pipelines need more than accuracy. They need explainability: confidence scores that signal which fields are certain and which warrant review, source citations that ground every output in the source material, and human-in-the-loop (HITL) touchpoints for regulated workflows where full automation isn't permitted.
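A simple way to wire confidence scores and citations into a HITL touchpoint is a triage step that auto-accepts only fields that are both confident and cited. The 0.90 threshold, the dictionary shape, and the function name below are placeholders chosen for the example; the right threshold depends on the workflow.

```python
def triage(fields, review_threshold=0.90):
    """Split field names into auto-accepted and needing human review.

    A field is auto-accepted only if it meets the threshold and carries a citation.
    """
    auto_accept, needs_review = [], []
    for field in fields:
        confident = field["confidence"] >= review_threshold
        cited = field.get("citation") is not None
        if confident and cited:
            auto_accept.append(field["name"])
        else:
            needs_review.append(field["name"])
    return auto_accept, needs_review


fields = [
    {"name": "invoice_number", "confidence": 0.99, "citation": {"page": 1}},
    {"name": "total", "confidence": 0.72, "citation": {"page": 3}},
    {"name": "vendor", "confidence": 0.97, "citation": None},  # confident but uncited
]
auto_accept, needs_review = triage(fields)
print("auto:", auto_accept, "review:", needs_review)
```

Routing uncited fields to review regardless of score reflects the point that a value with no traceable source cannot be audited, however confident the model is.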

The cost-versus-accuracy tradeoff is frequently misframed. Agentic extraction does more work per document than a single pass, so it costs more per document. But measured against manual review of a 500-page fund statement, it's faster and cheaper at scale. The relevant comparison isn't "deep extraction vs. single-pass extraction." It's "deep extraction vs. the headcount currently reviewing what single-pass gets wrong."

Where Traditional OCR and Single-Pass Extraction Fall Short

Optical character recognition extracts text from document images. It doesn't verify whether extracted values are complete, consistent, or reconciled against document-level totals. That's a design constraint, not a defect waiting for a fix. Traditional OCR was built to convert images to text, not to evaluate whether the resulting text is correct.

Traditional OCR is also layout-fragile. Rotate the document, change the column order, use a non-standard table format, and accuracy drops. Tesseract and similar tools perform well on clean, consistent inputs. They degrade on the irregular documents that actually show up in enterprise workflows: agricultural invoices with handwritten annotations, multi-format brokerage statements, legal filings with embedded exhibits. In any document-heavy industry, these aren't edge cases. They're the norm.

Single-pass LLM extraction inherits the verification problem and adds its own. The model gets the whole document, extracts what it can in one pass, and returns without a loop or a check. It can produce plausible-looking output that's missing fields, has consolidated entries the document didn't consolidate, or contains values that appear nowhere in the source. The output looks reasonable. The errors stay invisible until they surface downstream.

LlamaParse addresses this at the architecture level. Agentic orchestration selects the best model for each document component (traditional OCR, a vision language model, or an LLM) depending on what that component requires. Verification loops run before any output is returned. When document formats change, no retraining is required because the orchestration layer adapts. A cost optimizer routes each element to the most efficient model for the task, which adds up at scale.

Multi-modal understanding handles what pure text recognition can't: charts, embedded images, and tables with merged cells.
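To illustrate the general idea of per-component routing with a cost preference, here is a toy router. It is not LlamaParse's API or its internal logic: the component kinds, the capability table, and the relative cost units are all made up for the sketch.

```python
# Relative cost per component, in made-up units (assumption for illustration).
COST = {"ocr": 1, "llm": 5, "vlm": 20}

# Which model kinds are assumed able to handle each component kind (hypothetical).
CAPABLE_MODELS = {
    "plain_text": ["ocr", "llm", "vlm"],
    "table": ["llm", "vlm"],
    "merged_cell_table": ["vlm"],
    "chart": ["vlm"],
}


def route(component_kind):
    """Pick the cheapest model assumed capable of handling the component."""
    # Unknown component kinds fall back to the most general (and most expensive) option.
    candidates = CAPABLE_MODELS.get(component_kind, ["vlm"])
    return min(candidates, key=COST.__getitem__)


components = ["plain_text", "table", "chart", "handwritten_note"]
plan = {kind: route(kind) for kind in components}
print(plan)
```

The design choice worth noting is the fallback: when the router does not recognize a component, it spends more rather than risk a cheaper model silently missing data.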

Deep Extraction in Production: Where Field Accuracy Becomes Non-Negotiable

The use cases where deep extraction pays off share three characteristics: high field count, high accuracy requirement, and an audit trail that traces back to the source.

Financial services is the clearest case. Brokerage statements and fund reports require every transaction line to reconcile. A dropped row means a reporting error that flows directly into straight-through processing rates, which is what finance operations teams actually track for automation ROI.

Insurance presents the same requirement from a different angle. Claims processing extracts values that feed directly into downstream approvals. When an extracted figure is wrong, the approval is wrong. Citations let adjusters trace any field back to the source document, which matters for accuracy and for the regulatory requirement to document decision inputs.

Legal workflows need the full audit trail: contract extraction with field-level provenance, version-consistent output across large document sets, and traceable citations for review workflows and regulatory discovery.

Government and agriculture work with some of the most irregular document formats around. Rigid, template-based extraction fails on these reliably. County payment reports, cattle sales invoices, and agricultural permit applications aren't structured PDFs with predictable layouts. An orchestration layer that adapts to format variation handles them where a fixed template breaks.

Moving from 10-20% field accuracy on a single-pass frontier model to 99-100% with an agentic extraction loop is not a better version of the same answer. It is a different category of solution.

Getting Deep Extraction Right Without Building It From Scratch

Building an agentic verification loop in-house is possible. It's also a substantial engineering investment before you've processed a single production document. You need to design sub-agent orchestration, define verification criteria, build re-extraction trigger logic, instrument for HITL workflows, and then maintain all of it as document formats evolve. Teams that have done this describe it as building a product within a product.

LlamaExtract provides schema-based deep extraction with agentic verification built in. Define your schema, set your verification criteria, and the pipeline handles the loop. No training required to get started on your first document type, and no retraining required when formats change, because the orchestration layer adapts.

LlamaParse is the parsing foundation that LlamaExtract builds on. Parsing and extraction serve different functions in a document pipeline, and both are required for deep extraction to work. Before extraction can verify anything, the document needs to be converted accurately. LlamaParse's layout-aware computer vision and multi-modal processing handle that at the input stage, selecting the best combination of OCR, VLMs, and LLMs per document component. The parsed output is what the extraction layer verifies against.

As document complexity increases and regulatory requirements around auditability tighten, single-pass extraction becomes untenable for high-stakes workflows. The question stops being "can the model read this document" and becomes "can the model read it, verify what it read, and prove it."

If your current pipeline relies on a single pass and human review to catch what it misses, LlamaExtract is worth testing against your actual documents.