Greetings, LlamaIndex community!

We’re excited to introduce our new blog series, the LlamaIndex Update. Recognizing the fast pace of our open-source project, this series will serve as your continual guide, tracking the latest advancements in features, webinars, hackathons, and community events.

Our goal is simple: to keep you updated, engaged, and inspired. Whether you’re a long-time contributor or a new joiner, these updates will help you stay in sync with our progress.

So, let’s explore the recent happenings in our premier edition of the LlamaIndex Update.

Features And Integrations:

- LLMs with Knowledge Graphs, supported by NebulaGraph. This new stack enables unique retrieval-augmented generation techniques. Our Knowledge Graph index introduces a GraphStore abstraction, complementing our existing data store types. Docs, Tweet

- For a better LLM app UX, responses can now include in-line citations of their sources, enhancing interpretability and traceability. Our new `CitationQueryEngine` enables these citations and ensures they correspond with the retrieved documents. This feature is a step toward improving transparency in LlamaIndex applications. Docs, Tweet

- LlamaIndex integrates with Microsoft Guidance to ensure structured outputs from LLMs. It allows direct prompting of JSON keys and facilitates the conversion of Pydantic objects into the Guidance format, enhancing structured interactions. It can be used independently or with the `SubQuestionQueryEngine`. Docs, Tweet

- The GuidelineEvaluator module allows users to set text guidelines, thereby aiding in the evaluation of LLM-generated text responses. This paves the way toward automated error correction capabilities. Notebook, Tweet

- We now include a simple `OpenAIAgent`, offering an agent interface capable of sequential tool use and async callbacks. This integration was made possible with the help of the OpenAI function API and the LangChain abstractions. Tweet

- `OpenAIPydanticProgram` in LlamaIndex enhances structured output extraction. This standalone module allows any LLM input to be converted into a Pydantic object, providing a streamlined approach to data structuring. Docs, Tweet

- We now incorporate the FLARE technique for knowledge-augmented long-form generation. FLARE uses iterative retrieval to construct extended content, deciding whether to perform retrieval with each sentence. Unlike conventional vector index methods, our FLARE implementation builds a template iteratively, filling gaps with retrieval for more pertinent responses. Please note, this is a beta feature and works best with GPT-4. Docs, Tweet

- We now employ the Maximal Marginal Relevance (MMR) algorithm to enhance diversity and minimize redundancy in retrieved results. This technique rewards similarity between a candidate document and the query while penalizing similarity to previously selected documents, with the balance set by a user-specified threshold. Careful calibration of this threshold is necessary so that increased diversity doesn’t introduce irrelevant context. Docs, Tweet

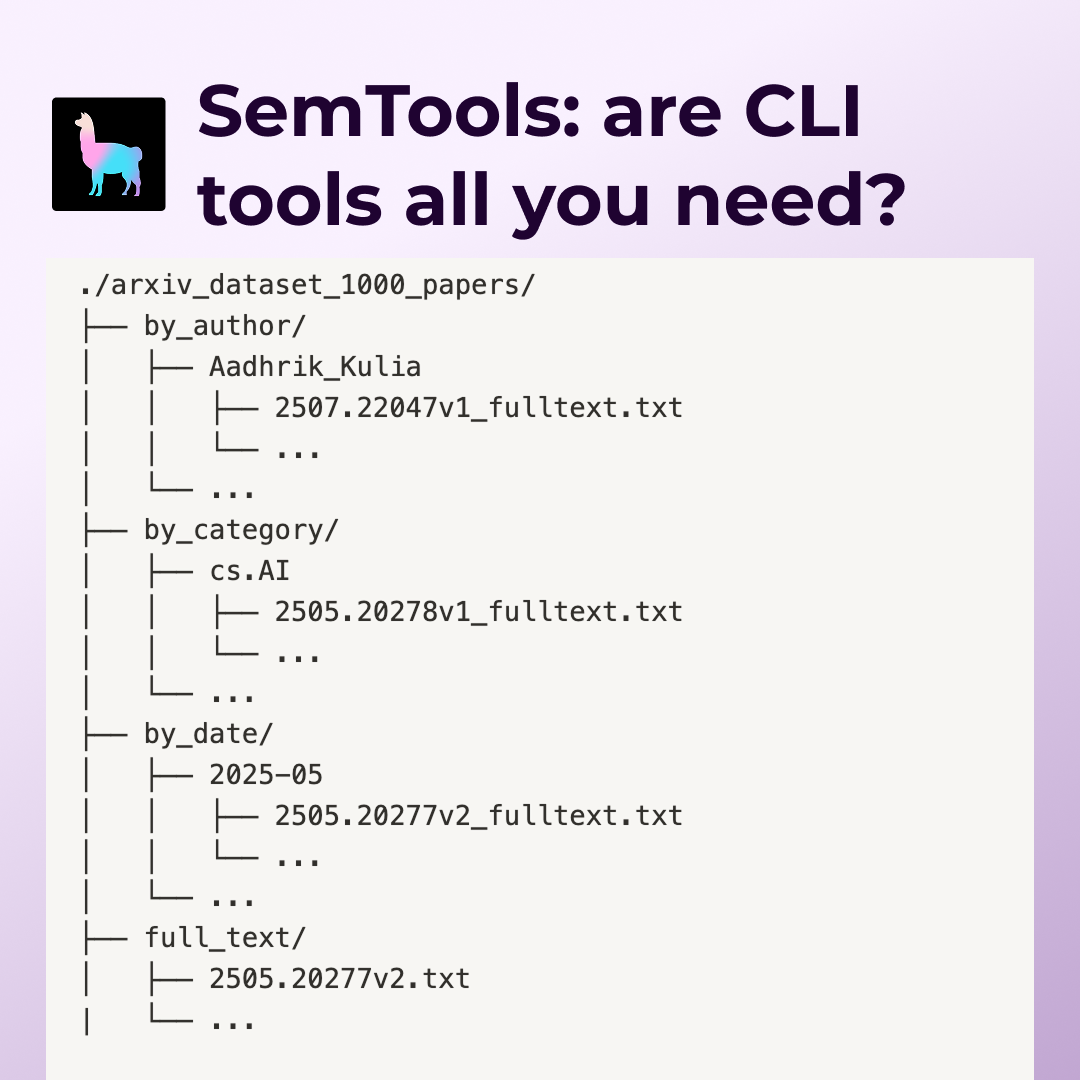
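To make the MMR trade-off described above concrete, here is a minimal, dependency-free sketch. It is not LlamaIndex's implementation: the function names, the toy two-dimensional embeddings, and the exact way `threshold` weights relevance against redundancy are all assumptions chosen for illustration.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mmr_select(
    query: Sequence[float],
    docs: Dict[str, Sequence[float]],
    k: int,
    threshold: float = 0.5,
) -> List[str]:
    """Greedily pick k document ids, trading relevance against redundancy.

    threshold=1.0 ranks purely by similarity to the query; lower values
    penalize candidates that resemble already-selected documents.
    (Hypothetical weighting, assumed for this sketch.)
    """
    selected: List[str] = []
    remaining = dict(docs)
    while remaining and len(selected) < k:
        best_id, best_score = None, -math.inf
        for doc_id, vec in remaining.items():
            relevance = cosine(query, vec)
            # Highest similarity to anything already chosen (0.0 if nothing yet).
            redundancy = max((cosine(vec, docs[s]) for s in selected), default=0.0)
            score = threshold * relevance - (1.0 - threshold) * redundancy
            if score > best_score:
                best_id, best_score = doc_id, score
        selected.append(best_id)
        del remaining[best_id]
    return selected


# Toy embeddings: doc_a and doc_b are near-duplicates, doc_c points elsewhere.
query = [1.0, 0.3]
docs = {
    "doc_a": [1.0, 0.0],
    "doc_b": [0.99, 0.1],
    "doc_c": [0.6, 0.8],
}

relevance_only = mmr_select(query, docs, k=2, threshold=1.0)
diversified = mmr_select(query, docs, k=2, threshold=0.5)
print(relevance_only, diversified)
```

With the threshold at 1.0 the two near-duplicate documents fill both slots. Lowering it to 0.5 swaps the second slot for the less similar document, which is the diversity effect the threshold controls.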

- We now support recursive Pydantic objects for complex schema extraction. This enhancement, inspired by parsing directory trees, employs a mix of recursive (Node) and non-recursive (DirectoryTree) Pydantic models, facilitating more sophisticated agent-tool interactions. Tweet

- We have developed agents that can perform advanced query planning over data using the Function API and Pydantic. These agents input a full Pydantic graph in the function signature of a query plan tool, which is then executed. This system can work with any tool and has the potential to construct complex query plans. However, it has limitations like difficulty in producing deep nesting and the possibility of outputting invalid responses. Docs, Tweet

- `OpenAIAgent` is capable of advanced data retrieval and analysis, such as auto-vector database retrieval and joint text-to-SQL and semantic search. We have also built a query plan tool interface that allows the agent to generate structured/nested query plans, which can then be executed against any set of tools, enabling advanced reasoning and analysis. Docs: OpenAI Agent + Query Engine, Retrieval Augmented OpenAI Agent, OpenAI Agent Query Planning. Tweet

- The new multi-router feature allows for QA over complex data collections, where answers may be spread across multiple sources. It uses a “MultiSelector” object to select relevant choices given a query. The router can pick up to a maximum number of choices. It can use either a raw LLM completion API or the OpenAI Function API. If the Function API is used, schema validity can be enforced. A simple usage example involves a RouterQueryEngine, where the PydanticMultiSelector selects the relevant vector and keyword index to synthesize an answer. Docs, Tweet

- We have made a significant upgrade to our token tracking feature. Users can now easily track prompt, completion, and embedding tokens through the platform’s callback handler. The upgrade aims to make token counting more efficient and user-friendly. Docs, Tweet

- We released a guide that demonstrates how to build a custom retriever that combines vector similarity search with knowledge graphs in LLM RAG systems. It involves constructing a vector index and a knowledge graph index and combining the results from both during query time. This method can improve results by providing additional context for entities. However, it may lead to a slight increase in latency. Docs, Tweet

- In an LLM workflow, managing large amounts of data, including PDFs, agent Tools, SQL table schemas, etc., requires efficient indexing. To handle this, we introduce our Object Index, a wrapper over our existing index data structures. This allows any object to be converted into an indexable text format, providing a unified interface that enhances the functionality of our indices over various data types. Tweet

- The OpenBB Finance Terminal is a fully open-source platform for investment research. It now includes a feature called AskOBB, powered by LlamaIndex, which allows users to access financial data through natural language. Tweet

- The TruLens team has introduced tracing for LlamaIndex-based LLM applications in its latest release. This new feature allows developers to evaluate and track their experiments more efficiently. It automatically evaluates various components of the application stack, including app inputs and outputs, LLM calls, retrieved-context chunks from an index, and latency. This is part of an ongoing collaboration between the LlamaIndex and TruLens teams to improve the development, evaluation, and iteration of LLM apps. Notebook, Blogpost

- Prem App has integrated with LlamaIndex, enhancing privacy in AI development. The integration allows developers to connect custom data sources to large language models easily, simplifying data ingestion, indexing, and querying. To use it, download the Prem App and connect your data sources through LlamaIndex. This gives developers more control and flexibility over data management when building AI applications. Notebook, Blogpost

- We now enable the extraction of tabular data frames from unstructured text. This feature, powered by the OpenAI Function API and Pydantic models, simplifies text-to-SQL or text-to-DF conversions within structured data workflows. Note that effective use may require significant prompt optimization. Docs, Tweet
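As an illustration of the recursive Pydantic schema mentioned in the feature list above, the sketch below pairs a recursive `Node` model with a non-recursive `DirectoryTree` wrapper. Only the two class names come from the announcement. The field names (`name`, `children`, `root`), the helper `count_nodes`, and the sample data are hypothetical, and the nested dictionary stands in for structured output that an LLM might return.

```python
from typing import List

from pydantic import BaseModel, Field


class Node(BaseModel):
    """Recursive model: a file or folder that may contain further nodes."""

    name: str  # hypothetical field name
    children: List["Node"] = Field(default_factory=list)  # self-reference


# Resolve the self-reference; the method name differs between Pydantic v1 and v2.
if hasattr(Node, "model_rebuild"):
    Node.model_rebuild()
else:
    Node.update_forward_refs()


class DirectoryTree(BaseModel):
    """Non-recursive wrapper holding the root of the tree."""

    root: Node  # hypothetical field name


def count_nodes(node: Node) -> int:
    """Count a node plus all of its descendants."""
    return 1 + sum(count_nodes(child) for child in node.children)


# Stand-in for nested structured output; validated into typed objects.
raw = {
    "root": {
        "name": "project",
        "children": [
            {"name": "README.md"},
            {"name": "src", "children": [{"name": "main.py"}]},
        ],
    }
}

tree = DirectoryTree(**raw)
print(tree.root.name, count_nodes(tree.root))
```

Because validation recurses through `children`, a malformed entry at any depth raises a validation error instead of passing through silently.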

Tutorials:

- James Briggs's tutorial on using LlamaIndex with Pinecone.

- Jerry Liu's tutorial on using LlamaIndex with Weaviate.

- Sophia Yang's tutorial covering a LlamaIndex overview, use cases, and integration with LangChain.

- Anil Chandra Naidu is building a course on LlamaIndex. The course presently covers topics such as introduction, fundamentals, and data connectors.

- OpenAI cookbook by Simon on how to perform financial analysis with LlamaIndex.

Webinars And Podcasts:

- Webinar on Demonstrate-Search-Predict (DSP) with Omar Khattab.

- Webinar on practical challenges of building a legal chatbot over your PDFs with Sam Yu.

- MaML podcast with Jerry Liu.

Hackathons:

The LlamaIndex team has presented at the UC Berkeley Hackathon and the Stellaris VP Hackathon in India. The community has warmly welcomed LlamaIndex, and teams at these hackathons have developed intriguing use cases, including customer support during emergencies and understanding legal documents.

Events:

- Jerry Liu spoke on building and troubleshooting an AI search and retrieval system at the joint Arize and LlamaIndex event.

- Ravi Theja presented on LlamaIndex and its applications at Together in India.

That’s all for this edition of the LlamaIndex Update. We hope you found this information useful and are as excited as we are about the progress we’re making. We’re grateful for the continued support and contributions from our community. Remember, your feedback and suggestions are invaluable to us, so don’t hesitate to reach out.

Stay tuned for our next update, where we’ll share more exciting developments from the LlamaIndex project. Until then, happy indexing!